Top AI Prompt Engineering Trends in [2026]

Stay ahead with the latest AI prompt engineering trends of 2026. Leverage our expert-driven prompt engineering solutions to unlock smarter automation, enhance AI performance, and gain a competitive edge in your industry.

Quick Summary – What This Guide Covers

AI prompt engineering in 2026 is a $6.95 billion discipline with its own tools, governance standards, and job market.


Why Prompt Engineering Is Now a Core Engineering Discipline

Three years ago, prompt engineering was a workaround, something developers used to stop ChatGPT from hallucinating. Today, it sits at the centre of how AI products are designed, built, governed, and scaled.

The numbers confirm it: the global prompt engineering and agent programming tools market is valued at $6.95 billion in 2025 (Fortune Business Insights), growing at a CAGR of over 33% through 2034.

Gartner forecasts 75% of enterprises will deploy generative AI by 2026, with prompt engineering cited as a core competency for successful implementation. On LinkedIn, demand for prompt-related roles rose 135.8% in 2025 alone.

This shift reflects a deeper change: prompts are no longer one-off instructions. They are programmable, versionable, testable artefacts that shape how AI systems think, respond, and scale. Engineers who understand this distinction are building the most impactful AI products of 2026.

This guide covers every trend, technique, and tool that is actually moving the needle right now. Let’s dive in.

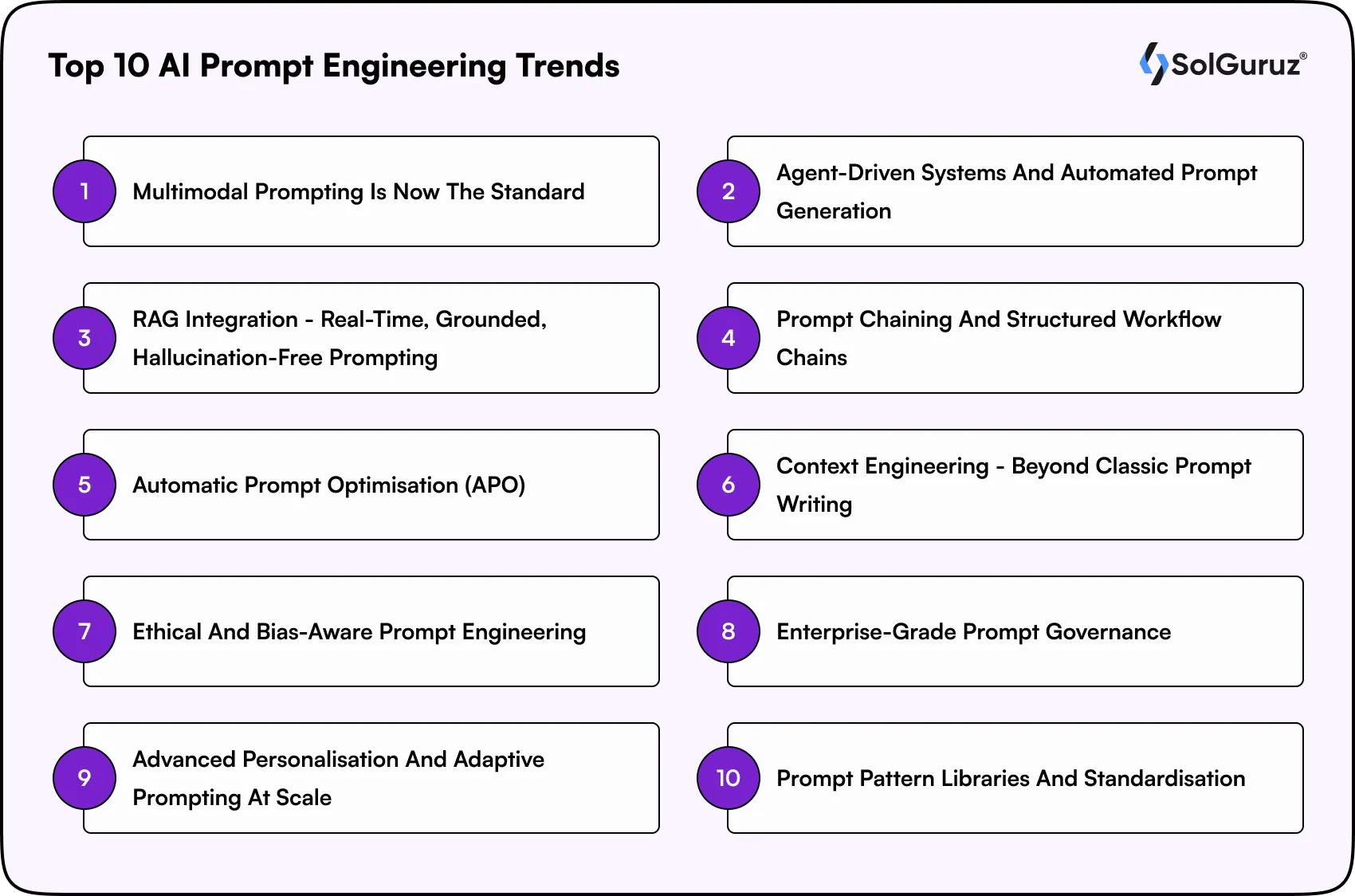

Top 10 AI Prompt Engineering Trends in 2026

These are not predictions; these are trends already deployed in production at leading AI teams globally, confirmed by search data, enterprise reports, and developer surveys.

Trend 1. Multimodal Prompting Is Now the Standard

Until 2024, prompting was largely a text-only exercise. In 2026, multimodal prompting, which combines text, images, video, and audio in a single prompt context, has become the standard for enterprise AI applications. Models like GPT-4o, Gemini 1.5 Pro, and Claude 3.5 Sonnet natively accept non-text inputs as part of the prompt (images, documents such as PDFs and spreadsheets, and, depending on the model, audio), unlocking workflows that were previously impractical.

Real-World Applications:

- Medical imaging: Radiologists combine scan images + clinical notes for AI-generated structured diagnostic summaries

- E-commerce: Upload product photos and auto-generate SEO-optimised descriptions, A/B copy variants, and structured data

- Legal review: Scanned contracts as image inputs + text prompts to extract clauses and flag risk deviations

- Code review: Architecture diagrams alongside code snippets for context-aware AI debugging and review

Trend 2. Agent-Driven Systems and Automated Prompt Generation

The next evolution of AI prompt engineering is not humans writing better prompts; it is AI systems generating, refining, and executing their own prompts autonomously. Agent-driven architectures handle complex, multi-step workflows with minimal human input. Tools like AutoGPT, LangGraph, and CrewAI decompose a high-level goal into sub-tasks, prompt specialised sub-agents, and synthesise outputs.

Real-World Applications:

- Goal decomposition: Top-level agent breaks a business goal into data analysis, copy, and testing sub-tasks

- Dynamic prompt generation: Each sub-task receives a context-aware prompt generated at runtime, not pre-written

- Memory and feedback loops: Agents adapt their prompts based on session history and prior step outputs

- Human oversight gates: Approval checkpoints added at critical junctures to catch errors before they compound

Trend 3. RAG Integration – Real-Time, Grounded, Hallucination-Free Prompting

Retrieval-Augmented Generation (RAG) has matured from an experimental technique into a production-grade standard. RAG mitigates the hallucination problem by retrieving relevant, up-to-date documents at query time and injecting them into the prompt before the model responds. The model answers from real, current data rather than relying only on stale or fabricated training knowledge. Virtually every enterprise AI chatbot, knowledge assistant, and document Q&A system now uses RAG as its foundation.

Real-World Applications:

- Hybrid RAG: Dense vector search combined with BM25 keyword retrieval for higher precision

- Multi-vector RAG: Storing summary + full text + key sentences per document to improve retrieval quality

- GraphRAG (Microsoft): Knowledge graphs representing entity relationships for structured, relational retrieval

- Self-RAG: Models that decide when to retrieve versus generate, reducing unnecessary retrieval overhead
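As a rough illustration of the retrieve-then-inject flow described above, the sketch below ranks documents by simple word overlap and places the top matches into the prompt. The word-overlap score is only a stand-in for real vector or BM25 search, and the document store, `retrieve`, and `build_rag_prompt` are hypothetical names rather than part of any library.

```python
import re

# Hypothetical in-memory document store; a real system would use a vector DB.
DOCS = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be refunded or exchanged.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Rank documents by word overlap with the query (toy stand-in for real retrieval)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, docs: list[str]) -> str:
    """Inject the retrieved passages into the prompt ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


prompt = build_rag_prompt("How many days do I have for returns?", DOCS)
```

The instruction to admit when the context lacks an answer is one common way to keep the model grounded; it lowers the risk of fabricated answers but does not remove it.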

Trend 4. Prompt Chaining and Structured Workflow Chains

The era of the single, perfect prompt is over. Prompt chaining, breaking complex tasks into a deliberate sequence of scoped prompts, where each step produces a clean output that feeds the next, is now the standard architecture for reliable AI outputs. Teams using prompt chains report measurably better accuracy, easier debugging, and more consistent quality than single-shot approaches.

Real-World Applications:

- Content pipelines: Research → Outline → Draft → SEO Review → QA as separate, chained steps

- Code generation: Spec → Architecture → Implementation → Test writing → Documentation in sequence

- Customer support: Intent classification → Knowledge retrieval → Response generation → Tone adjustment

- Data analysis: Ingestion → Cleaning → Insight extraction → Visualisation description → Report drafting
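A chain like the pipelines above can be sketched in a few lines. This is a minimal sketch that assumes the model is reachable through a plain function taking a prompt and returning text; `run_chain`, `STEPS`, and `fake_llm` are illustrative names, and `fake_llm` only echoes its instruction so the example runs without an API.

```python
from typing import Callable

# Type of a model call: takes a prompt string, returns the model's text.
LLM = Callable[[str], str]


def run_chain(task: str, steps: list[tuple[str, str]], llm: LLM) -> dict[str, str]:
    """Run scoped prompts in order, feeding each step's output into the next."""
    outputs: dict[str, str] = {}
    current = task
    for name, template in steps:
        current = llm(template.format(input=current))
        outputs[name] = current  # keep every intermediate result for debugging
    return outputs


# Hypothetical steps mirroring the first part of the content pipeline above.
STEPS = [
    ("research", "List key facts about: {input}"),
    ("outline", "Turn these facts into an outline:\n{input}"),
    ("draft", "Write a short draft from this outline:\n{input}"),
]


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; echoes the instruction line it was given."""
    return f"[output of: {prompt.splitlines()[0]}]"


results = run_chain("prompt chaining", STEPS, fake_llm)
```

Keeping every intermediate output is what makes a chain easier to debug than a single prompt: when the final draft is wrong, the failing step can be inspected in isolation.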

Trend 5. Automatic Prompt Optimisation (APO)

APO tools automatically generate prompt variants, evaluate them against a success metric, and iteratively refine the winner without a human writing each variation. Adaptive prompt optimisation now automates approximately 30% of prompt refinements that were previously done manually (TechRT, 2026). Teams using APO report faster iteration cycles and measurably better output quality within days rather than weeks.

Real-World Applications:

- DSPy (Stanford): Programmatically optimises prompts using training examples; widely used in enterprise NLP pipelines

- PromptFoo: Open-source. Evaluates hundreds of prompt variants against custom test cases and performance metrics

- Weights & Biases Prompts: Track, compare, and optimise prompt performance alongside model training experiments

- LLM-as-Judge: Use GPT-4 to score and compare outputs of cheaper, faster models on the same prompt task
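The evaluate-and-select half of that loop might look like the sketch below. It assumes exact-match accuracy on a small labelled set as the success metric and leaves variant generation out; the test cases, variants, and `fake_llm` are made up for illustration and do not reflect how DSPy or PromptFoo work internally.

```python
from typing import Callable

LLM = Callable[[str], str]

# Hypothetical labelled test cases acting as the success metric.
TEST_CASES = [
    ("I love it", "Positive"),
    ("Terrible service", "Negative"),
    ("Great value", "Positive"),
]

# Hypothetical prompt variants to compare.
VARIANTS = [
    "What is the sentiment? {text}",
    "Answer with one word, Positive or Negative. Review: {text}",
]


def accuracy(template: str, cases: list[tuple[str, str]], llm: LLM) -> float:
    """Fraction of test cases where the model output exactly matches the label."""
    hits = sum(llm(template.format(text=text)).strip() == label for text, label in cases)
    return hits / len(cases)


def best_variant(variants: list[str], cases: list[tuple[str, str]], llm: LLM) -> tuple[str, float]:
    """Score every variant and return the winner together with its score."""
    scored = [(accuracy(v, cases, llm), v) for v in variants]
    score, winner = max(scored)
    return winner, score


def fake_llm(prompt: str) -> str:
    """Stand-in model: gives a bare label only when asked for one word."""
    label = "Negative" if "Terrible" in prompt else "Positive"
    return label if "one word" in prompt else f"The sentiment seems {label.lower()}."


winner, score = best_variant(VARIANTS, TEST_CASES, fake_llm)
```

In this toy setup the second variant wins because it constrains the output format, which is the kind of difference an automated metric can surface without manual review.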

Trend 6. Context Engineering – Beyond Classic Prompt Writing

In 2026, leading AI teams have moved beyond ‘prompt engineering’ toward ‘context engineering’, the practice of managing everything in the model’s context window as a structured, programmable resource. As Andrej Karpathy described it: the LLM is the CPU, the context window is RAM, and the engineer’s job is to act as the operating system, loading exactly the right code and data for every task.

This shift is especially critical for building AI agents, where multiple components (memory, tools, and reasoning) must work together seamlessly. Instead of isolated prompts, teams now design systems where context becomes the backbone of agent behaviour and decision-making.

Real-World Applications:

- Context window management: Ordering inputs so the most relevant content always occupies the most attended positions

- Memory architecture: Short-term (session), medium-term (30-day), and long-term (account-level) memory injection

- Tool-use context: Structuring how tool results (search outputs, code execution) are injected back into the prompt

- Context caching: Placing static system instructions first for token efficiency and API cost reduction
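One way to picture context window management is as a budgeted assembly step. The sketch below is an assumption-laden illustration: it counts whitespace-separated words instead of using a real tokenizer, and `Block`, `estimate_tokens`, and `assemble_context` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Block:
    name: str
    text: str
    priority: int  # lower number = more important


def estimate_tokens(text: str) -> int:
    """Crude token estimate (whitespace-separated words); not a real tokenizer."""
    return len(text.split())


def assemble_context(system: str, blocks: list[Block], budget: int) -> str:
    """Keep the static system prompt first, then add blocks by priority while they fit."""
    parts = [system]
    used = estimate_tokens(system)
    for block in sorted(blocks, key=lambda b: b.priority):
        cost = estimate_tokens(block.text)
        if used + cost > budget:
            continue  # skip blocks that do not fit; smaller ones may still fit
        parts.append(f"## {block.name}\n{block.text}")
        used += cost
    return "\n\n".join(parts)


context = assemble_context(
    system="You are a support assistant.",
    blocks=[
        Block("Long-term memory", "Customer has been on the Pro plan since 2023.", priority=2),
        Block("Tool result", "Order 1042 shipped on Monday.", priority=1),
        Block("Session history", "word " * 50, priority=3),
    ],
    budget=30,
)
```

Placing the static system text first matches the context caching point above, since a stable prefix is what provider-side caches can typically reuse.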

Trend 7. Ethical and Bias-Aware Prompt Engineering

As AI systems influence hiring, lending, healthcare diagnoses, and legal decisions, prompt engineers have become the first line of defence against biased or harmful outputs. Ethical prompting, designing prompts with explicit safeguards, fairness constraints, and content guardrails, is no longer optional. Forrester reports 30% of large companies now require formal AI training in 2026, with bias-aware prompting a core component. Structured prompt techniques reduce AI output errors by up to 76% where properly deployed.

Real-World Applications:

- Bias safeguards: Instructions that steer AI away from demographic assumptions or discriminatory language

- Compliance-aware prompting: Healthcare and finance prompts engineered to avoid HIPAA, GDPR, or SEC violations

- Sensitive content guardrails: Explicit instructions specifying what topics to handle carefully or avoid entirely

- Red-teaming: Systematic adversarial testing of prompts for failure modes before any production deployment

Trend 8. Enterprise-Grade Prompt Governance

Prompt engineering has moved from personal Notion docs into a full engineering infrastructure. In 2026, enterprises treat prompts like software with version control, access control, audit logs, regression testing, and deployment pipelines. Teams with formal prompt governance frameworks report significantly fewer production incidents from prompt drift or model-update-induced behaviour changes. Around 40% of enterprise applications will include task-specific AI agents by the end of 2026 (TechRT), making governance infrastructure critical.

Real-World Applications:

- Prompt versioning: Git-style version control; roll back instantly when outputs degrade after a model update

- Role-based access control: Only authorised engineers modify production prompts; others work in staging

- Audit logging: Every prompt execution is logged with input, output, model version, latency, and cost

- CI/CD for prompts: Automated regression pipelines that run golden test sets on every prompt change
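The versioning and regression ideas above can be sketched without any dedicated platform. In this minimal sketch, a content hash identifies the prompt version and a golden set is checked with a simple substring rule; `prompt_version`, `run_golden_set`, the golden cases, and `fake_llm` are all hypothetical, and real pipelines usually apply richer checks.

```python
import hashlib
from typing import Callable

LLM = Callable[[str], str]


def prompt_version(template: str) -> str:
    """Short content hash so logs can record exactly which prompt text ran."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


def run_golden_set(template: str, golden: list[dict], llm: LLM) -> list[str]:
    """Return the ids of golden cases whose output lacks the expected substring."""
    failures = []
    for case in golden:
        output = llm(template.format(**case["vars"]))
        if case["must_contain"] not in output:
            failures.append(case["id"])
    return failures


# Hypothetical golden cases; a real set would live in version control next to the prompt.
GOLDEN = [
    {"id": "refund-1", "vars": {"question": "Can I get a refund?"}, "must_contain": "refund"},
    {"id": "ship-1", "vars": {"question": "When will it ship?"}, "must_contain": "ship"},
]

TEMPLATE = "Answer the customer politely: {question}"


def fake_llm(prompt: str) -> str:
    """Stand-in model that repeats the question back in its answer."""
    return "Thanks for asking. " + prompt.split(": ", 1)[1]


failures = run_golden_set(TEMPLATE, GOLDEN, fake_llm)
```

A CI job could run `run_golden_set` on every prompt change and block the merge when the returned list is non-empty.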

Trend 9. Advanced Personalisation and Adaptive Prompting at Scale

Static prompts produce static, one-size-fits-all outputs. In 2026, the most successful AI products use prompts that dynamically adapt to individual users’ preferences, history, reading level, and real-time behaviour. Companies like Duolingo, Notion AI, and Intercom have deployed adaptive prompt systems at scale, reporting measurable improvements in task completion, satisfaction scores, and retention. Advanced personalisation moves prompt engineering from a technical function into a direct revenue driver.

Real-World Applications:

- User profile injection: Embed user preferences, account history, and persona into the system prompt at runtime

- Behavioural context: Adjust tone and depth based on session behaviour, such as dwell time and interaction patterns

- Reading level adaptation: Auto-detect and match output complexity to the individual user’s expertise level

- Cohort A/B testing: Serve different prompt variants to user segments and measure impact on business metrics
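User profile injection can be as simple as rendering a template per request. The sketch below assumes a small profile object and a fixed mapping from expertise level to a depth instruction; `UserProfile`, `SYSTEM_TEMPLATE`, and `DEPTH_RULES` are illustrative and not drawn from any product mentioned above.

```python
from dataclasses import dataclass
from string import Template


@dataclass
class UserProfile:
    name: str
    expertise: str       # e.g. "beginner" or "expert"
    preferred_tone: str


# Hypothetical base template; $-placeholders are filled per user at request time.
SYSTEM_TEMPLATE = Template(
    "You are a helpful tutor. Address the user as $name. "
    "Write for a $expertise audience in a $tone tone. $depth_rule"
)

# Assumed mapping from expertise level to an output-depth instruction.
DEPTH_RULES = {
    "beginner": "Define every technical term and keep sentences short.",
    "expert": "Skip basic definitions and focus on edge cases.",
}


def build_system_prompt(profile: UserProfile) -> str:
    """Render the system prompt for one user, falling back to the beginner rule."""
    return SYSTEM_TEMPLATE.substitute(
        name=profile.name,
        expertise=profile.expertise,
        tone=profile.preferred_tone,
        depth_rule=DEPTH_RULES.get(profile.expertise, DEPTH_RULES["beginner"]),
    )


prompt = build_system_prompt(UserProfile("Asha", "expert", "concise"))
```

Falling back to the beginner rule for unknown expertise levels is a design choice made here for safety; a production system might instead infer the level from behaviour.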

Trend 10. Prompt Pattern Libraries and Standardisation

Prompt engineering is transitioning from a trial-and-error art form into a standardised engineering practice. Communities and enterprise teams are building libraries of proven prompt patterns: reusable templates and syntax strategies with documented performance characteristics. These are the design patterns of the AI era. By 2026, most enterprise AI teams will maintain an internal prompt library, significantly reducing onboarding time and improving output consistency across engineering, product, and content functions.

Real-World Applications:

- Role-based templates: Pre-built prompts for common personas: legal analyst, medical summariser, code reviewer

- Task templates: Reusable prompt structures for summarisation, classification, extraction, and generation tasks

- Prompt marketplaces: PromptBase, AIPRM, and internal wikis hosting curated, performance-tested prompt libraries

- Cross-model portability: Templates designed to work across GPT-4, Claude, and Gemini with minimal adaptation

Key note: AI prompt engineering in 2026 is no longer about crafting clever inputs; it’s about designing intelligent, adaptive systems that manage context, automate decisions, and scale reliably. The shift toward agentic workflows, RAG, and context engineering signals a move from experimentation to full-scale production infrastructure. Teams that treat prompts as programmable assets, not static text, are the ones building the most resilient and high-performing AI products.

Core Prompt Engineering Techniques Every Practitioner Must Know in [2026]

Trends define the direction. Techniques are the tools you use to get there. These are the foundational and advanced prompting methods actively used in production systems today, from beginner-friendly to cutting-edge.

1. Zero-Shot Prompting – The Starting Point

Zero-shot prompting gives the model a direct instruction with no examples, relying entirely on pre-trained knowledge. It works well for well-defined tasks: summarisation, translation, sentiment classification, and ideation. Always start here before adding complexity. If zero-shot works, there is no reason to use few-shot.

Zero-Shot Example

Prompt: “Classify the following customer review as Positive, Neutral, or Negative. Review: [text]”

Best for: Clear, well-defined tasks where the model already understands the domain well.

2. Few-Shot Prompting – Teaching by Example

Few-shot prompting provides 2–5 examples of the desired input-output pattern directly in the prompt, teaching the model through demonstration. Research by Brown et al. (2020) shows strong accuracy gains from just 1–2 examples, with diminishing returns beyond 4–5. The key mechanism is pattern completion. The model recognises your structure and continues it. Few-shot consistently outperforms zero-shot for tasks with custom output formats, niche domains, or specific brand voice requirements.

Simple Example of Few-Shot Prompting

Prompt: Classify the sentiment of the following sentences:

Sentence: “I like this product, it’s amazing!” Sentiment: Positive

Sentence: “This is the worst experience I’ve had.” Sentiment: Negative

Sentence: “The app works okay, nothing special.” Sentiment:

Model Output: Neutral

What’s happening here?

In simple terms, few-shot prompting means:

You teach the AI by showing a few examples before asking it to do the task.

Instead of just giving instructions, you provide 2–5 sample inputs and outputs so the model understands the pattern and follows it.

- Zero-shot: “Classify this sentence” → AI guesses

- Few-shot: “Here are 3 examples → now classify this” → AI performs better
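A few-shot prompt is mostly a matter of consistent layout, which a small helper can enforce. The sketch below reuses the sentences from the example above; the Positive and Negative labels and the `build_few_shot_prompt` helper are assumptions made for illustration.

```python
def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Lay out labelled examples, then the unlabelled query, in one consistent pattern."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f'Sentence: "{text}"')
        lines.append(f"Sentiment: {label}")
        lines.append("")
    lines.append(f'Sentence: "{query}"')
    lines.append("Sentiment:")  # left open so the model completes the pattern
    return "\n".join(lines)


# Labels here are assumed for illustration.
EXAMPLES = [
    ("I like this product, it's amazing!", "Positive"),
    ("This is the worst experience I've had.", "Negative"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of the following sentences:",
    EXAMPLES,
    "The app works okay, nothing special.",
)
```

Ending the prompt on an open `Sentiment:` line relies on the pattern-completion mechanism described above: the model is nudged to supply only the missing label.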

3. Chain-of-Thought (CoT) Prompting – Unlocking Reasoning

CoT prompting asks the model to reason step-by-step before producing a final answer. Introduced by Google researchers in 2022 and now a production standard, CoT reduces factual errors by 18–34% on multi-step reasoning benchmarks. It is now the default technique for any task involving maths, logic, legal analysis, medical reasoning, or complex decision-making.

| CoT Type | How to Use It | Best For |
| --- | --- | --- |
| Zero-Shot CoT | Add ‘Think step by step’ to any prompt | Quick reasoning boost with no examples needed |
| Few-Shot CoT | Provide examples that show the reasoning steps before the answer | Complex maths, logic, multi-step analysis |
| Auto-CoT | Auto-generates CoT demonstrations using input clustering | Enterprise pipelines needing automated reasoning |
| Self-Consistency | Generate multiple reasoning paths, select the most common answer | High-stakes outputs where single reasoning paths can fail |
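Self-consistency, the last row of the table, comes down to sampling several reasoning traces and taking a majority vote over their final answers. The sketch below assumes each trace ends with an `Answer:` marker; `make_fake_sampler` replaces a real model sampled at non-zero temperature, and all names are hypothetical.

```python
from collections import Counter
from typing import Callable

# A sampler returns one full reasoning trace ending in "Answer: <value>".
Sampler = Callable[[str], str]


def extract_answer(trace: str) -> str:
    """Take whatever follows the last 'Answer:' marker as the final answer."""
    return trace.rsplit("Answer:", 1)[-1].strip()


def self_consistent_answer(prompt: str, sample: Sampler, n: int = 5) -> str:
    """Sample n reasoning paths and return the most common final answer."""
    answers = [extract_answer(sample(prompt)) for _ in range(n)]
    return Counter(answers).most_common(1)[0][0]


def make_fake_sampler(traces: list[str]) -> Sampler:
    """Stand-in for a sampled model; cycles through canned reasoning traces."""
    state = {"i": 0}

    def sample(prompt: str) -> str:
        trace = traces[state["i"] % len(traces)]
        state["i"] += 1
        return trace

    return sample


sampler = make_fake_sampler([
    "17 + 25 = 42. Answer: 42",
    "17 + 25 = 41. Answer: 41",  # a faulty reasoning path
    "20 + 22 = 42. Answer: 42",
])
answer = self_consistent_answer("What is 17 + 25? Think step by step.", sampler, n=5)
```

The faulty path is outvoted by the paths that agree, which is why the technique suits outputs where a single reasoning chain can fail. The cost is n model calls per question.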

4. Role Prompting – Aligning Model Behaviour

Role prompting means telling the AI who it should act like before answering. For example:

“You are a senior financial analyst…” or “You are a marketing expert…”

This helps the AI use the right tone, knowledge, and level of detail for the task.

It works especially well for tasks like writing, analysis, or giving advice. For simple tasks like yes/no answers or basic classification, it doesn’t make much difference.

5. Tree-of-Thought (ToT) Prompting – Exploring Multiple Paths

Tree-of-thought extends chain-of-thought by prompting the model to explore multiple reasoning branches or solution paths before selecting the best one, essentially building a decision tree rather than a linear chain. ToT is powerful for complex planning, creative problem-solving, and multi-option scenarios, but it is compute-intensive. Reserve it for high-stakes decisions; it is overkill for routine tasks.

6. Meta-Prompting – Directing the Model’s Reasoning Process

Meta-prompting instructs the model to first outline its analysis framework before executing the task. This produces more structured, auditable outputs and is especially valuable for legal review, risk analysis, medical summarisation, and any domain where the reasoning process matters as much as the conclusion. Combined with Auto-CoT, meta-prompting is one of the highest-impact techniques for complex enterprise workflows.

7. Generated Knowledge Prompting – Priming the Model with Context

Generated knowledge prompting is a powerful generative AI technique where the model first generates relevant background information on a topic, and then uses that context to answer the main question. This is particularly effective for research synthesis, educational content, and content marketing, where priming with relevant knowledge before the main task produces measurably richer, more accurate outputs than a single-step prompt.

Production Insight from SolGuruz Engineers

In production, a three-step chain (classify intent → gather context → generate response) consistently outperforms a single carefully crafted mega-prompt, even at higher token cost. The reliability gain is always worth it. Establish a zero-shot baseline first, then add techniques only to fix specific failure modes you observe. Treat prompt engineering like empirical science: change one variable at a time, and measure the impact on output quality.

Top Prompt Engineering Tools in [2026]

The tooling ecosystem has matured from a handful of experimental libraries to a rich, production-grade stack. Here are the tools most widely deployed in serious AI systems today, organised by function.

| Category | Tool | What It Does | Cost Model |
| --- | --- | --- | --- |
| Orchestration | LangChain / LangGraph | Prompt chaining, agentic workflows, RAG pipelines | Open-source / LangSmith paid |
| Prompt Testing | PromptFoo | A/B test prompt variants, regression testing, LLM-as-Judge | Open-source |
| Prompt Optimisation | DSPy (Stanford) | Automatic prompt optimisation using training data | Open-source |
| Observability | LangSmith | Versioning, tracing, monitoring, and debugging | Free tier / paid |
| Vector DB (RAG) | Pinecone | Production-grade vector DB for RAG and semantic search | Pay-as-you-go |
| Vector DB (RAG) | Weaviate / pgvector | Open-source, self-hosted vector DB options | Open-source |
| Prompt Management | PromptLayer | Version control for prompts, team collaboration | Free tier / paid |
| Cost Monitoring | Helicone | LLM API cost tracking, latency, prompt caching | Free tier / paid |
| Enterprise Platform | Maxim AI | End-to-end AI lifecycle: evaluation, simulation, observability | Enterprise |
| Multi-Agent | CrewAI | Role-based multi-agent orchestration | Open-source |

Prompt Engineering Market Size (2026)

The commercial reality of prompt engineering is now impossible to ignore. Here is the data that makes the business case clear.

Market Size Forecast (2025–2034)

| Year | Market Size | Key Growth Driver |
| --- | --- | --- |
| 2025 | $505 million | Early enterprise GenAI adoption |
| 2026 | $672 million | RAG and multimodal prompting go mainstream |
| 2027 | $893 million | Agentic AI and APO platform commercialisation |
| 2028 | $1.19 billion | Enterprise governance and compliance tooling |
| 2030 | $2.09 billion | Mainstream adoption across all industry verticals |
| 2034 | $6.70 billion | Mature, industrialised prompt infrastructure |
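The forecast figures can be sanity-checked with the standard compound-growth formula, value = base × (1 + CAGR)^years. The sketch below assumes the $505 million 2025 base from the table and the 33.27% CAGR quoted in the FAQ of this guide; `project` is a hypothetical helper name.

```python
def project(base: float, cagr: float, years: int) -> float:
    """Compound a base value forward: base * (1 + cagr) ** years."""
    return base * (1 + cagr) ** years


BASE_2025 = 505.0  # $ million, taken from the table above
CAGR = 0.3327      # 33.27%, the rate quoted in the FAQ of this guide

# Print the projected market size ($ million) for each year listed in the table.
for year in (2026, 2027, 2028, 2030, 2034):
    print(year, round(project(BASE_2025, CAGR, year - 2025)))
```

The projected values land within roughly 2% of the table's figures, so the table is consistent with constant compounding at that rate; the small gaps presumably come from rounding in the source.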

Remember: Prompt engineering is no longer just a technical skill; it’s becoming a core business capability, driving real ROI as generative AI adoption scales across industries.

Real-World Prompt Engineering Use Cases by Industry [2026]

Prompt engineering is not a theoretical discipline; it is deployed in production across every major vertical. Here is how leading organisations are using it today.

1. Financial Services

91% of financial services firms report implementing generative AI in some capacity (TechRT, 2026). Prompt engineering is what makes those deployments accurate, compliant, and safe.

- Contract analysis: Structured prompt chains review thousands of legal agreements per day, identifying clauses that deviate from standard templates and flagging risk by severity.

- Compliance documentation: RAG-augmented prompts cross-reference transactions against regulatory rules and auto-generate compliance narrative reports for review.

- Fraud detection narratives: AI-generated structured reports on flagged transactions, incorporating transaction history, user behaviour patterns, and rule-matching evidence.

2. Healthcare and Life Sciences

Over 70% of healthcare organisations have implemented or are pursuing generative AI capabilities (TechRT, 2026). Prompt engineering governs accuracy and safety in every use case.

- Clinical documentation: Physicians dictate notes; RAG-augmented prompts cross-reference patient history and generate structured clinical summaries in FHIR-compatible formats.

- Drug discovery: Few-shot prompts extract and synthesise findings from thousands of academic papers, compressing months of literature review into days.

- Patient education: Adaptive prompting personalises health information for each patient based on literacy level, primary language, and medical history.

3. Software Development and Engineering

84% of developers now use or plan to use AI tools in development workflows (SQ Magazine, 2025). Prompt engineering is what separates useful AI coding assistants from unreliable ones.

- Spec-Driven Development: Structured specification prompts guide Claude Code, Cursor, and GitHub Copilot to generate code that matches architecture rules, security constraints, and API contracts before implementation begins.

- Code review automation: Chained prompts classify the change type, apply the relevant review checklist, and generate structured review comments in the team’s style.

- Documentation generation: Few-shot prompts trained on existing documentation style guides generate API docs, README files, and inline comments that match the team’s voice and standards.

4. E-Commerce and Retail

Generative AI in retail is expected to deliver $400–600 billion in industry value (McKinsey). Much of this flows through prompt-engineered content and personalisation workflows.

- Product catalogue at scale: Multimodal prompts ingest product images and generate SEO-optimised descriptions, meta tags, and structured data across thousands of SKUs simultaneously.

- Personalised recommendations: Adaptive prompts inject user browsing history, purchase patterns, and seasonal context to generate hyper-personalised product recommendations at scale.

- Customer service automation: RAG-augmented prompts retrieve product specs, inventory, return policies, and order details in real time to answer queries accurately without hallucination.

How to Build Your Prompt Engineering Capability in 2026: Team Roadmap

Knowing the trends is step one. Acting on them systematically is what separates high-performing AI teams from everyone else. Here is a practical roadmap organised by team size and engineering maturity.

| Team Stage | Priority Actions | Tools to Deploy First |
| --- | --- | --- |
| Solo developer/freelancer | Master CoT + few-shot fundamentals. Build a personal prompt library. Use PromptFoo to A/B test systematically. | PromptFoo, DSPy, LangChain basics |
| Early startup (1–10 engineers) | Implement prompt versioning in Git. Build your first RAG pipeline. Experiment with LangGraph for agentic workflows. | LangSmith, LangGraph, Pinecone, LangChain |
| Growth startup (10–50 engineers) | Formalise prompt governance. Introduce APO. Deploy multimodal prompts. Establish a golden test set for regression testing. | LangSmith, PromptLayer, PromptFoo, Pinecone |
| Mid-size org (50–200 engineers) | Build a shared prompt library. Add role-based access control for production prompts. Deploy LLM-as-Judge for automated QA. | Maxim AI or LangSmith Enterprise, internal prompt library |
| Enterprise (200+ engineers) | Full prompt lifecycle management. Multi-model routing. Compliance-aware prompting with PII guardrails. Adaptive personalisation at scale. | Maxim AI, Helicone, custom governance layer, Pinecone |

Conclusion: Prompt Engineering in 2026 Is Infrastructure, Not a Trick

Prompt engineering in 2026 is no longer a shortcut; it’s a foundational layer of modern AI systems. With advancements like RAG, agent-based workflows, and context engineering, prompts are now designed with structure and intent.

This shift also brings the need for governance, standardisation, and reliability. Prompts are being treated more like production code, where consistency, testing, and scalability matter just as much as creativity.

Teams that recognise this early are building faster and delivering better results. That’s the approach SolGuruz follows, focusing on practical, scalable AI solutions rather than one-off experiments.

FAQs

1. What is the most important AI prompt engineering trend in 2026?

Multimodal prompting and context engineering are the two most impactful shifts in 2026. Multimodal prompting unlocks entirely new workflow categories by combining text, image, and audio inputs in a single prompt. Context engineering represents the broader discipline emerging from prompt engineering, treating the entire model context window as programmable, manageable infrastructure rather than a simple text input.

2. What is the difference between prompt engineering and context engineering?

Prompt engineering traditionally focuses on crafting the text instruction sent to an AI model. Context engineering is the broader practice of managing everything the model sees (system prompts, retrieved documents, memory, tool outputs, conversation history, and user profile data) as a holistic, structured resource. In 2026, the most sophisticated AI teams operate at the context engineering level.

3. What is RAG, and why is every AI team deploying it in 2026?

RAG (Retrieval-Augmented Generation) addresses the hallucination problem. Instead of relying solely on training knowledge, RAG retrieves relevant, current documents at query time and injects them into the prompt. The model responds based on real data rather than stale or fabricated training content. In 2026, RAG is a production standard for any AI system requiring accurate, grounded outputs.

4. How is prompt chaining different from writing a single detailed prompt?

Prompt chaining breaks a complex task into a deliberate sequence of scoped prompts, where each step produces a clean output that feeds the next. A single mega-prompt tries to do everything at once, reducing the model's focus and increasing error rates. Chaining produces more accurate, auditable, and debuggable outputs at the cost of more tokens and slightly higher latency. For any task that matters, the trade-off is always worth it.

5. What tools do prompt engineers use most in 2026?

The most commonly deployed tools are: LangChain and LangGraph for prompt orchestration and agent workflows; DSPy for automatic prompt optimisation and PromptFoo for prompt testing; Pinecone and Weaviate for RAG vector storage; LangSmith for prompt versioning and tracing; and Helicone for API cost monitoring. For enterprise-scale teams, Maxim AI provides an end-to-end prompt lifecycle platform covering evaluation, simulation, and observability.

6. Is prompt engineering a sustainable and well-paying career in 2026?

Yes. The median total pay for prompt engineers in the US is $126,000/year (Glassdoor, December 2025), with senior specialists and staff-level roles at top AI companies earning $200,000–$270,000+. Demand for prompt-related roles grew 135.8% in 2025, and 68% of firms now provide formal prompt engineering training. The role is evolving from standalone 'Prompt Engineer' titles into embedded AI workflow expertise across engineering, product, and data science roles.

7. What is the prompt engineering market worth in 2026?

The global prompt engineering market is projected to reach $672 million in 2026, growing from $505 million in 2025 at a CAGR of 33.27% (Fortune Business Insights). The broader prompt engineering and agent programming tools market, including agentic AI tooling, is valued at $6.95 billion in 2025.

8. What is the biggest challenge with advanced prompt engineering in production?

For most teams, the hardest challenge is operational, not technical. Maintaining prompt quality across model updates, preventing prompt drift, building a governance culture, and creating systematic evaluation pipelines are harder problems than writing a good prompt. Teams that solve governance and evaluation first consistently outperform teams that focus solely on technique.

Written by

Lokesh Dudhat

Lokesh Dudhat is the Co-Founder and CTO of SolGuruz, with 15+ years of hands-on experience in full-stack and product engineering. He spent over a decade building native applications across iPhone, iPad, Apple Watch, and Apple TV ecosystems before expanding into backend systems, Angular, Node.js, Python, AI software and solutions, and cloud architecture. As CTO, Lokesh defines and enforces engineering standards, architecture practices, and DevOps maturity across all delivery teams. He is actively involved in system design reviews, scalability planning, code quality frameworks, and platform architecture decisions for complex products. He works closely with product teams and enterprise clients to design resilient, maintainable, and performance-driven systems. His writing focuses on software architecture, headless CMS systems, backend engineering, scalability patterns, and engineering best practices.
