What is a Vector Database? How It Works, Use Cases & Tools [2026]

This blog discusses what a vector database is, how it works, and why it is essential for modern AI applications. It covers key concepts like embeddings, ANN search, real-world use cases, top tools in 2026, and how to choose the right solution based on your needs.

Key Takeaways

|

The AI infrastructure landscape looked very different in 2023. Purpose-built vector databases like Pinecone were a novelty, and only the most cutting-edge teams were using them. Fast-forward to early 2026, and vector search has become a standard capability embedded into PostgreSQL, MongoDB Atlas, Oracle AI Database 26ai, and every major hyperscaler. The standalone vector database market, valued at $2.58 billion in 2025, is projected to reach $17.9 billion by 2034, but the bigger story is that the technology is going mainstream.

Whether you are building your first RAG chatbot or scaling an agentic AI system to millions of users, understanding how vector databases work and where the market is heading is now a core skill for any software team. This guide provides a comprehensive overview.

What Is a Vector Database?

A vector database is a specialized database designed to store, index, and search vector embeddings, numerical representations of unstructured data like text, images, audio, and code.

When an AI model (such as OpenAI’s text-embedding-3-small, Google’s Gecko, or open-source models like sentence-transformers) processes content, it converts it into a vector, a list of numbers that captures its meaning. Each number represents a different dimension of that meaning.

Vectors that are numerically close to each other represent semantically similar content, even if the actual words or formats are completely different.

One-line definition |

This capability -searching by meaning rather than keyword is what powers modern AI applications: RAG chatbots, semantic search engines, recommendation systems, AI agent memory, and multimodal search across images, audio, and video.

In 2026, vector search is no longer locked inside specialist databases. It has been absorbed as a native capability by relational and document databases, cloud platforms, and enterprise search engines. The concept remains the same, but the infrastructure options have multiplied.

The 2026 State of the Market: What Has Changed

The vector database landscape has evolved rapidly between 2024 and 2026. Understanding this shift helps teams make smarter architectural decisions today.

Vectors Are Now a Data Type, Not Just a Database Category

Until 2023–2024, most teams adopted dedicated vector databases alongside their existing data stack. By 2026, that separation is shrinking.

A widely discussed industry shift can be summarized as: vectors are no longer a separate system; they are becoming a native data type inside modern databases.

1. PostgreSQL

Now widely used as a foundation for GenAI applications, PostgreSQL supports vector search through extensions like pgvector and pgvectorscale. These add support for indexing methods such as HNSW and DiskANN for high-performance similarity search.

2. MongoDB Atlas Vector Search

MongoDB Atlas now treats vectors as a native field type, allowing developers to add semantic search without introducing a separate database system.

3. Oracle AI Database 26ai

Oracle AI Database 26ai (launched in October 2025) builds on earlier AI-focused releases and introduces Unified Hybrid Vector Search, combining vector search with relational, JSON, graph, and spatial queries in a single system.

4. Cloud Providers (Hyperscalers)

Major platforms like Google AlloyDB AI, Amazon OpenSearch Service, and Azure AI Search now offer fully managed vector search built directly into their ecosystems. This reduces the need for standalone vector databases in many use cases.

Key Note: The biggest shift in 2026 is not just better vector search performance, but architectural consolidation. Instead of adding a separate vector database, many teams are extending their existing databases with vector capabilities, keeping structured and unstructured data in one place, simplifying infrastructure, and reducing operational complexity.

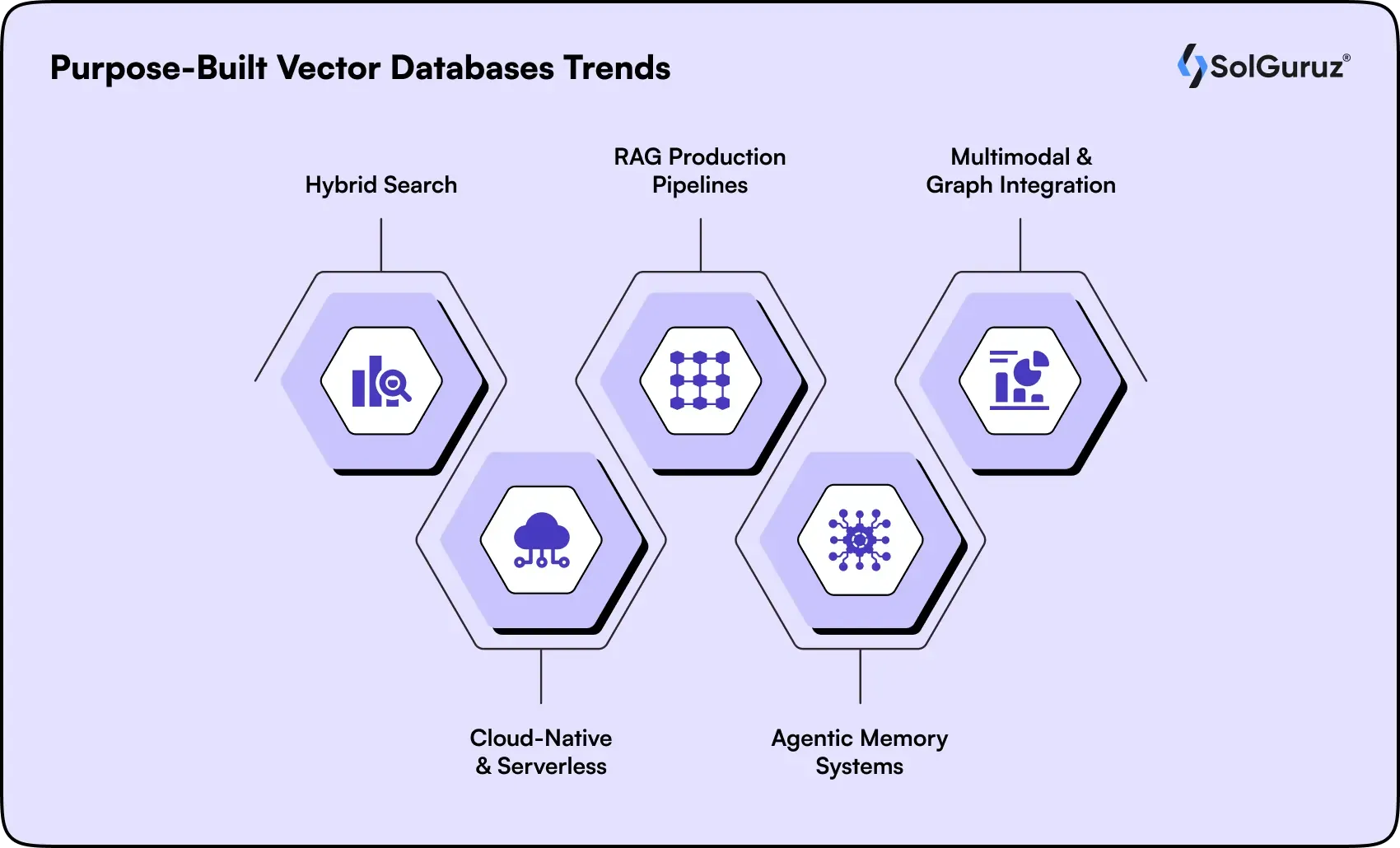

Purpose-Built Vector Databases Trends [2025-2026]

While general-purpose databases are rapidly adding vector capabilities, purpose-built vector databases continue to evolve with advanced features designed specifically for high-performance AI workloads, large-scale similarity search, and production-grade GenAI systems.

1. Hybrid Search

Hybrid search has become the production standard, combining dense vector (semantic) and sparse keyword (BM25) methods for superior retrieval accuracy over dense-only approaches used in 2024.

2. Cloud-Native & Serverless

Cloud-managed vector services like Pinecone now dominate, handling scalability while traditional self-hosted databases struggle with high-dimensional workloads.

3. RAG Production Pipelines

Vector databases enable robust Retrieval-Augmented Generation (RAG) pipelines with multi-modal data support and integration into enterprise search systems.

4. Agentic Memory Systems

AI agents leverage vector stores for three memory layers: short-term working memory, episodic session history, and semantic knowledge essential for persistent, adaptive workflows.

5. Multimodal & Graph Integration

2025-2026 sees multimodal embeddings (text+image+audio) in shared vector spaces, plus graph-enhanced retrieval combining relationships with semantic similarity for richer context.

How Does a Vector Database Work?

Here is the core workflow when a vector database is used inside an AI application:

- Content is embedded: An AI embedding model converts raw content, a document, image, customer record, or code snippet into a high-dimensional vector (typically 768 to 3,072 dimensions).

- Vectors are stored: The vector is stored alongside its metadata (document ID, source, timestamp, category) in the vector database.

- Query is embedded: When a user submits a query, the same embedding model converts it into a vector.

- ANN search runs: The database uses Approximate Nearest Neighbor (ANN) algorithms to find the stored vectors most similar to the query vector.

Results are returned: The matched vectors, with their original content and metadata, are returned, often within 10–50 milliseconds, even across billions of records.

What Is Approximate Nearest Neighbor (ANN) Search?

Exact nearest neighbor search across millions of high-dimensional vectors would require comparing every vector to the query computationally infeasible at scale. ANN algorithms find the closest results with a small, acceptable trade-off in precision, delivering massive speed gains. The three dominant ANN approaches in 2026:

- HNSW (Hierarchical Navigable Small World): Graph-based approach with excellent recall and speed. Used by Weaviate, Qdrant, pgvector, and Milvus. The default choice for most production workloads.

- IVF (Inverted File Index): Clusters vectors into partitions and searches only relevant ones. Used in FAISS and Pinecone. Good for very large-scale datasets.

- StreamingDiskANN: A newer algorithm optimized for disk-based storage, enabling vector search on datasets too large to fit in memory. Gaining adoption in pgvectorscale and cloud-native databases.

Insight: The power of vector databases lies in combining fast ANN search with rich embeddings, enabling AI systems to retrieve relevant information based on meaning, not exact matches, at a massive scale and low latency.

Similarity Metrics

| Metric | Best For | Key Property |

| Cosine Similarity | Text, NLP, document search | Measures the angle between vectors, ignores magnitude |

| Euclidean Distance (L2) | Image search, spatial data | Straight-line distance between two vector points |

| Dot Product | Recommendation systems | Measures alignment, fast but sensitive to vector magnitude |

| Hamming Distance | Binary embeddings, fingerprinting | Count of positions where two binary vectors differ |

Insight: The power of vector databases lies in combining fast ANN search with rich embeddings, enabling AI systems to retrieve relevant information based on meaning, not exact matches, at a massive scale and low latency.

Vector Database vs. Traditional Database

Traditional relational databases are designed to handle precise, structured queries like: “Find all customers where country = ‘India’ and plan = ‘Pro’.”

Vector databases, on the other hand, are built for semantic search. They can answer queries like: “Find documents related to contract disputes,” even if those exact words don’t appear in the content.

In short, traditional databases match exact values, while vector databases match meaning.

| Feature | Relational (SQL) | NoSQL | Vector Database |

| Query Type | Exact match (WHERE clauses) | Pattern / key-value queries | Semantic similarity (ANN search) |

| Data Format | Structured tables (rows & columns) | JSON / document-based | High-dimensional embeddings + metadata |

| Primary Use | Transactions, analytics, reporting | Flexible schema applications | AI, semantic search, RAG, agents |

| Search Capability | Keyword / exact match | Index-based lookup | Meaning-based similarity |

| Scales for AI | Limited without extensions | Moderate (with added vector support) | Purpose-built for AI workloads |

| 2026 Reality | Supports vectors via extensions (e.g., pgvector, AlloyDB AI) | Native vector support (e.g., MongoDB Atlas) | Specialized systems (e.g., Pinecone, Weaviate, Qdrant, Milvus) |

2026 Reality Check

In 2026, the lines have blurred. PostgreSQL with pgvector can handle moderate-scale AI workloads without a separate vector database. MongoDB Atlas Vector Search lets document database users add semantic search natively. The decision is no longer 'SQL vs vector', it is about which combination of capabilities fits your scale and existing stack.

Vector Database vs. Standalone Vector Index (FAISS)

Teams often start with FAISS (Facebook AI Similarity Search) for prototyping. It is fast, free, and easy to spin up. But FAISS is a library, not a database. It lacks everything you need to run AI applications reliably in production.

| Capability | FAISS (Standalone Index) | Vector Database (e.g., Qdrant, Pinecone) |

| Vector Database (e.g., Qdrant, Pinecone) | Manual/limited | Full insert, update, and delete support |

| Metadata Filtering | Not supported | Built-in with complex filtering |

| Real-Time Updates | Requires full re-indexing | Supports live updates |

| Persistence | File-based only | File-based only |

| Scalability | Single-node, manual setup | Horizontal scaling, serverless options |

| Access Control | None | API keys, RBAC, namespace isolation |

| Hybrid Search | Not supported | Supports BM25 + vector search (e.g., Weaviate, Qdrant) |

| Best For | Research, quick prototypes | Production AI applications |

Note: Start with FAISS for rapid prototyping. Scale to vector databases for production reliability.

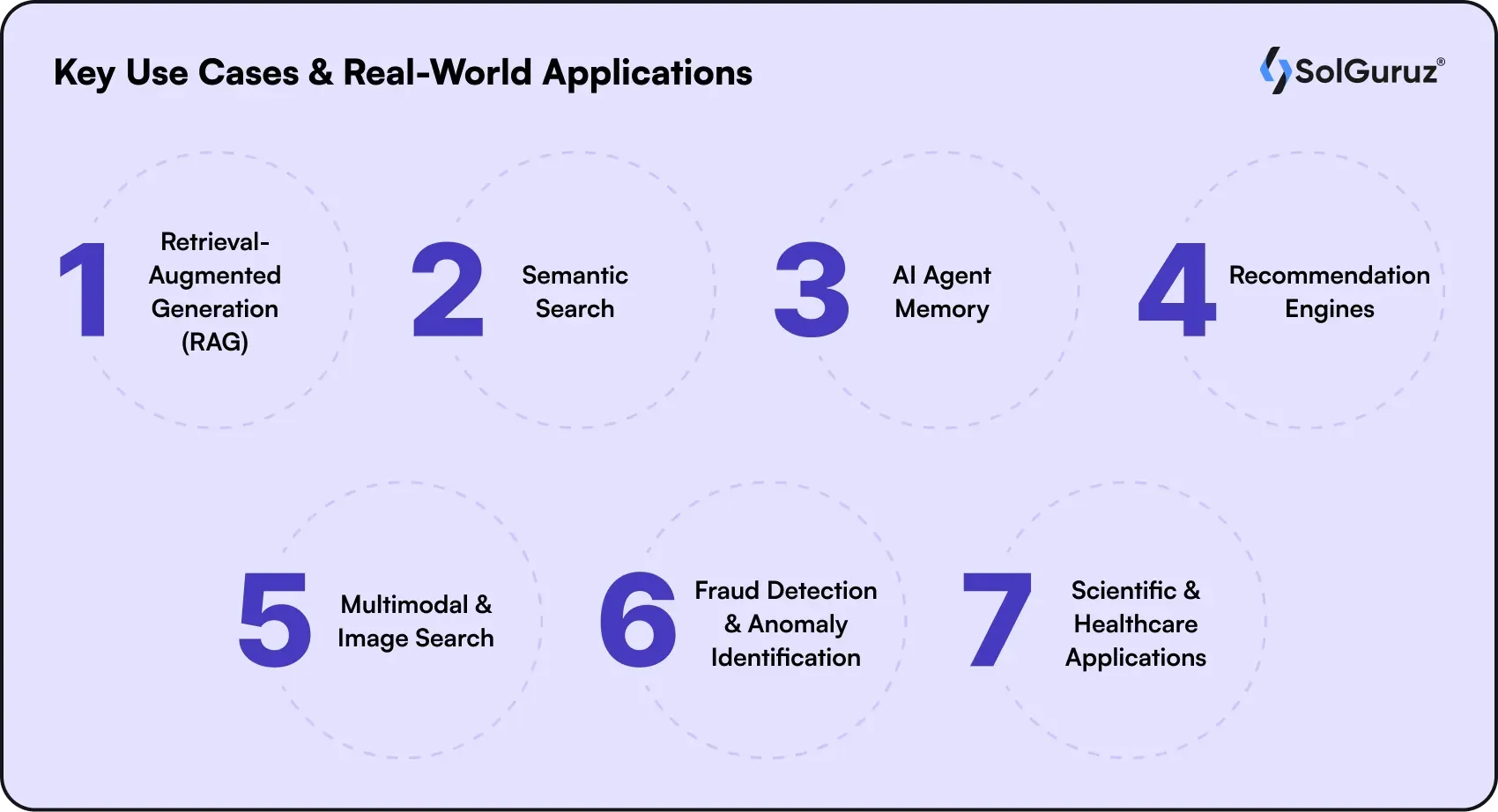

Key Use Cases & Real-World Applications

As vector databases mature from experimental tools to core infrastructure, their real value becomes clear in production use cases, powering everything from intelligent search to autonomous AI systems across industries.

1. Retrieval-Augmented Generation (RAG)

RAG remains the dominant production use case for vector databases in 2026. Your knowledge base, product documentation, or enterprise data is chunked, embedded, and stored. When a user asks a question, the most relevant chunks are retrieved and passed to an LLM as grounding context, dramatically reducing hallucinations.

Important 2026 update: Classic RAG (single-source, point-in-time retrieval) is being augmented by multi-source RAG and agentic memory systems to enable adaptive, stateful AI workflows. Vector databases serve both patterns.

Real-World Example

A healthcare SaaS company embeds 200,000 clinical protocol documents and stores them in Weaviate. When a clinician asks, 'What is the standard dosing protocol for vancomycin in ICU patients?', the system retrieves the 5 most relevant protocol chunks and passes them to GPT-4o, generating a grounded, cited answer rather than a hallucinated one.

2. Semantic Search

Traditional keyword search fails when users phrase queries differently from how the content is written. Semantic search closes that gap, returning results based on meaning, not word overlap. In 2026, hybrid search (vector + BM25 keyword) has become the production standard, outperforming pure-vector approaches for most document retrieval workloads.

3. AI Agent Memory

One of the biggest emerging use cases in 2025–2026. Autonomous AI agents require memory across sessions to operate effectively. Vector databases provide three memory layers: short-term working memory (recent context), episodic memory (session history), and long-term semantic memory (general knowledge). Systems like Mem0, Letta, and Memobase are building agent memory architectures on top of vector stores.

4. Recommendation Engines

E-commerce, media, and SaaS platforms encode user behavior, item attributes, and interaction history as embeddings. The closest vectors to a user's preference embedding become recommendations. Shopify's 2026 search blends sparse keyword signals with dense embeddings for best-in-class product relevance, a widely studied example of hybrid retrieval in production.

5. Multimodal & Image Search

With multimodal embedding models (like OpenAI CLIP, Google ImageBind) that embed text and images into a shared vector space, you can now search a product catalog by uploading an image or find relevant video frames using a text description. This was experimental in 2023; it is a production pattern in 2026.

6. Fraud Detection & Anomaly Identification

Transaction patterns, user behaviors, and access logs are embedded and stored. Statistical outliers - vectors far from all established clusters flag potential fraud or system anomalies. Fintech companies use this pattern to catch duplicate charges, detect account takeovers, and enforce PCI-DSS compliance at the specification level.

7. Scientific & Healthcare Applications

Drug discovery and genomics research produce extremely high-dimensional biological and chemical data. Vector embeddings of molecular structures, protein sequences, and genomic data allow researchers to find similar compounds or sequences at a speed not previously possible. Companies like InstaDeep are using vector databases to accelerate biological modeling and drug candidate discovery.

Also read: What Is Retrieval Augmented Generation Rag

Top Vector Database Tools in 2026

The vector database ecosystem has matured significantly. Here is a structured overview of the leading options as of early 2026:

Purpose-Built Vector Databases

| Tool | Type | Best For | 2026 Notable Update |

| Pinecone | Managed / Serverless | Production RAG, enterprise scale | Multi-cloud (AWS, GCP, Azure), 5-10ms latency at scale |

| Weaviate | Open-source + cloud | Hybrid search, multimodal, enterprise | Used in production by NVIDIA, IBM, and Salesforce |

| Qdrant | Open-source (Rust) + cloud | High-performance, filtered search, self-hosted | 2025: ACORN algorithm, MMR, multilingual full-text; 2026 roadmap: 4-bit quantization |

| Milvus / Zilliz | Open-source + managed cloud | Billion-scale deployments, GPU workloads | GPU acceleration, distributed querying, and Kafka integration |

| Chroma | Open-source | Local dev, LLM prototyping | 2025 Rust rewrite: 4x faster writes/queries vs original Python |

Integrated / General-Purpose Databases with Vector Support

As vector databases mature from experimental tools to core infrastructure, their real value becomes clear in production use cases powering everything from intelligent search to autonomous AI systems across industries.

| Tool | Base Technology | Best For | Vector Capability |

| pgvector + pgvectorscale | PostgreSQL extension | Existing PostgreSQL users, moderate scale | HNSW + StreamingDiskANN; optimized for high-performance similarity search |

| MongoDB Atlas Vector Search | MongoDB (document database) | Existing MongoDB users adding AI features | Vectors as a native field type with aggregation pipeline support |

| Oracle AI Database 26ai | Oracle (enterprise database) | Large enterprises, regulated industries | Unified Hybrid Vector Search (vectors + relational + JSON + graph in one query) |

| AWS OpenSearch | Managed search service | AWS-native teams, Bedrock integration | k-NN vector search with integration into Amazon Bedrock |

| Elasticsearch | Managed search platform | Enterprise search with hybrid retrieval | BM25 + vector hybrid search with tunable relevance |

| LanceDB | Embedded / serverless | Edge computing, IoT, and desktop applications | Runs inside the application, no separate server required |

SolGuruz Team Recommendation for 2026

- For teams starting a new AI product: use pgvector/Chroma locally, then evaluate whether your scale justifies a dedicated vector database.

- For production at tens of millions of vectors: Pinecone Serverless (easiest ops) or Qdrant self-hosted (best cost/performance).

- For existing PostgreSQL teams at moderate scale: pgvectorscale is now a serious production option that avoids new infrastructure. For enterprise-regulated industries: Weaviate or Qdrant with self-hosted deployment on your own cloud.

How to Choose the Number 1 Right Vector Database

The right tool depends on your team's existing stack, data scale, deployment constraints, and how much infrastructure you want to manage. Use this framework:

| Your Situation | Recommended Approach |

| Individual developer/prototype | Chroma (pip install, zero setup) or pgvector |

| Already using PostgreSQL (any team size) | pgvector + pgvectorscale to add vector search without new infrastructure |

| Already using MongoDB | MongoDB Atlas Vector Search (vectors as a native field type) |

| Small team (2–10), greenfield AI product | Pinecone Serverless (minimal ops, pay-per-query) |

| Medium team (10–50), performance-focused | Qdrant (self-hosted) or Weaviate Cloud |

| Large enterprise (50+), complex systems | Milvus (Zilliz) or Pinecone Enterprise |

| AWS-native team | Amazon OpenSearch Service + Bedrock integration |

| Regulated industry (fintech, healthcare, legal) | Qdrant or Weaviate (self-hosted for full data control) |

| Edge / IoT / offline application | LanceDB (embedded, no server required) |

| Need hybrid search (vector + keyword) | Weaviate or Qdrant (BM25 + vector support) |

Key evaluation criteria to assess before committing:

- Recall at your target QPS: Ask vendors for benchmarks at your expected query volume. A database with 95% recall at 100 QPS may drop to 80% recall at 10,000 QPS.

- Metadata filtering performance: Many teams discover that filtered vector search is significantly slower than unfiltered. Test with your actual filter patterns. Qdrant's ACORN algorithm specifically addresses this.

- Hybrid search quality: If your content has strong keyword signals (product names, codes, proper nouns), pure vector search underperforms. Prefer databases with native BM25 + vector hybrid support.

- Deployment model: Managed cloud (lowest ops, higher cost at scale) vs. self-hosted (more control, requires infra team) vs. embedded (no server, for edge use cases).

- Vendor lock-in risk: Open-source systems (Qdrant, Weaviate, Milvus, pgvector) let you move between cloud providers. Managed proprietary services, trade portability for convenience.

Also read: Foundation models.

Vector Database Quickstart: Embed, Store, Query

Here is the standard workflow for integrating a vector database into an AI application, from embedding generation to retrieval, using Python examples.

Step 1: Generate Embeddings

Embed your content (OpenAI example) from openai import OpenAI client = OpenAI() def embed(text: str) -> list[float]: response = client.embeddings.create( model='text-embedding-3-small', # 1,536 dims input=text ) return response.data[0].embedding |

Step 2: Store Vectors (Qdrant example)

Upsert with metadata from qdrant_client import QdrantClient from qdrant_client.models import PointStruct client = QdrantClient('localhost', port=6333) client.upsert( collection_name='knowledge_base', points=[ PointStruct( id=1, vector=embed('Vector databases store and search embeddings'), payload={ 'source': 'internal-wiki', 'team': 'engineering', 'updated': '2026-01' } ) ] ) |

Step 3: Query with Metadata Filtering

Semantic search with filter from qdrant_client.models import Filter, FieldCondition, MatchValue results = client.search( collection_name='knowledge_base', query_vector=embed('how do vector databases handle scale?'), query_filter=Filter( must=[ FieldCondition(key='team', match=MatchValue(value='engineering')) ] ), limit=5 ) for hit in results: print(hit.payload['source'], round(hit.score, 3)) |

This three-step pattern, embed, store, query, is the foundation of every RAG pipeline, semantic search system, and agentic memory architecture. The vector database sits between your raw data and your AI layer, acting as a queryable, semantic memory.

Challenges & Limitations

Vector databases are powerful, but teams consistently run into the same practical challenges. Knowing them in advance saves weeks of debugging.

- Embedding model quality drives retrieval quality: A weak embedding model will produce poor results regardless of which database you use. Invest time in evaluating and benchmarking embedding models for your specific content type and language.

- Chunking strategy is harder than it looks: For RAG, how you split the document,s chunk size, overlap, and splitting logic significantly affect what gets retrieved. There is no universal best practice. Semantic chunking (splitting at natural content boundaries) often outperforms fixed-size chunking.

- Recall degrades with aggressive metadata filtering: Filtered vector search reduces the search space and can significantly lower recall. Qdrant's ACORN algorithm was developed specifically to address this. Test your filter-heavy queries with realistic data volumes.

- Cost at billion-scale: Storing and querying billions of vectors is expensive, especially on managed cloud services. Quantization (reducing vector precision with minimal accuracy loss) and serverless architectures help, but require careful cost modeling.

- Vector databases are not transactional systems: They are optimized for approximate similarity search, not ACID transactions, complex joins, or aggregations. Production AI systems almost always need both a vector store and a relational database.

- Evaluation is difficult: Unlike SQL queries that return exact results, vector search returns approximate results with a relevance score. Measuring retrieval quality requires dedicated evaluation metrics (NDCG, MRR, recall@k) and labeled test datasets, a step many teams skip until retrieval quality becomes a visible problem.

Conclusion & Next Steps

Vector databases form AI's semantic memory layer, enabling RAG pipelines, agent systems, and multimodal search at production scale. SolGuruz helps teams implement battle-tested vector database architectures, from pgvector prototypes to Pinecone/Qdrant deployments that handle real business loads.

Choose right for 2026: pgvector for existing PostgreSQL stacks, Pinecone Serverless for zero-ops scaling, Qdrant for cost-effective high-performance workloads.

FAQs

1. What is a vector database in simple terms?

A vector database stores data as numerical arrays (vectors) that represent the meaning of content, then lets you search by semantic similarity finding what is conceptually closest to your query, not just what shares the same words. It is the memory layer that makes AI applications understand and retrieve information the way humans do.

2. What is the difference between a vector database and an SQL database?

A SQL database stores structured data in rows and columns and returns exact matches. A vector database stores high-dimensional embeddings and returns approximate nearest neighbor matches based on semantic similarity. In 2026, the distinction is blurring; PostgreSQL with pgvector now handles both. For moderate-scale AI workloads, you may not need a separate vector database at all.

3. What is the best vector database in 2026?

There is no single best option; it depends on your context. Pinecone is easiest to get started with for managed, serverless production deployments. Qdrant is the top performer for self-hosted high-performance workloads. Weaviate is strongest for hybrid search and multimodal data. pgvectorscale is the best option if you are already on PostgreSQL and do not want new infrastructure. Chroma is best for local prototyping.

4. Is a separate vector database still necessary in 2026?

Not always. With PostgreSQL's pgvectorscale benchmarking at 471 QPS at 99% recall on 50M vectors, and MongoDB Atlas, Oracle 26ai, and all major hyperscalers now offering native vector search, many teams can add vector capabilities to their existing database without a separate system. A dedicated vector database becomes necessary when you need to scale beyond hundreds of millions of vectors, need sub-10ms latency at thousands of QPS, or require advanced features like graph-enhanced retrieval that generalist databases do not yet support.

5. What is a vector database used for in AI?

The primary use cases in 2026 are: RAG (grounding LLM responses in your data), semantic search (finding content by meaning not keywords), AI agent memory (persistent context across sessions), recommendation engines (finding similar products, content, or users), multimodal search (cross-modal search across text, images, audio), and anomaly/fraud detection (identifying behavioral outliers).

6. What is hybrid search, and why is it important in 2026?

Hybrid search combines dense vector similarity search with sparse keyword search (BM25). By 2026, it will have become the production standard for document retrieval because it outperforms pure-vector approaches, especially when content contains important proper nouns, product codes, or domain-specific terms that a vector model may not embed well. Weaviate and Qdrant both support native hybrid search.

7. Does ChatGPT use a vector database?

OpenAI uses vector-based retrieval internally for features like memory and document analysis in ChatGPT. Developers building on top of GPT-4 or other OpenAI models commonly use Pinecone, Weaviate, Qdrant, or pgvector as the vector store layer in their RAG pipelines.

8. How large is the vector database market?

The global vector database market was valued at $2.58 billion in 2025 and is projected to grow to $17.9 billion by 2034 at a CAGR of approximately 24%. The US accounts for around 81% of the 2025 market revenue. Notably, Snowflake and Databricks alone spent $1.25 billion acquiring PostgreSQL companies with vector capabilities in 2025, signaling where enterprise investment is heading.

Looking for an AI Development Partner?

SolGuruz helps you build reliable, production-ready AI solutions - from LLM apps and AI agents to end-to-end AI product development.

Strict NDA

Trusted by Startups & Enterprises Worldwide

Flexible Engagement Models

1 Week Risk-Free Trial

Next-Gen AI Development Services

As a leading AI development agency, we build intelligent, scalable solutions - from LLM apps to AI agents and automation workflows. Our AI development services help modern businesses upgrade their products, streamline operations, and launch powerful AI-driven experiences faster.

Why SolGuruz Is the #1 AI Development Company?

Most teams can build AI features. We build AI that moves your business forward.

As a trusted AI development agency, we don’t just offer AI software development services. We combine strategy, engineering, and product thinking to deliver solutions that are practical, scalable, and aligned with real business outcomes - not just hype.

Why Global Brands Choose SolGuruz as Their AI Development Company:

Business - First Approach

We always begin by understanding what you're really trying to achieve, like automating any mundane task, improving decision-making processes, or personalizing user experiences. Whatever it is, we will make sure to build an AI solution that strictly meets your business goals and not just any latest technology.

Custom AI Development (No Templates, No Generic Models)

Every business is unique, and so is its workflow, data, and challenges. That's why we don't believe in using templates or ready-made models. Instead, what we do is design your AI solution from scratch, specifically for your needs, so that you get exactly what works for your business.

Fast Delivery With Proven Engineering Processes

We know your time matters. That's why we follow a solid, well-tested delivery process. Our developers follow AI-Assisted Software Development principles to move fast and stay flexible to make changes. Moreover, we always keep you posted at every step of the AI software development process.

Senior AI Engineers & Product Experts

When you work with us, you're teaming up with experienced AI engineers, data scientists, and designers who've delivered real results across industries. And they are not just technically strong but actually know how to turn complex ideas into working products that are clean, efficient, and user-friendly.

Transparent, Reliable, and Easy Collaboration

From day one, we keep clear expectations on timelines, take feedback positively, and share regular check-ins. So that you'll always know how we are progressing and how it's going.

Whether you’re modernizing a legacy system or launching a new AI-powered product, our AI engineers and product team help you design, develop, and deploy solutions that deliver real business value.